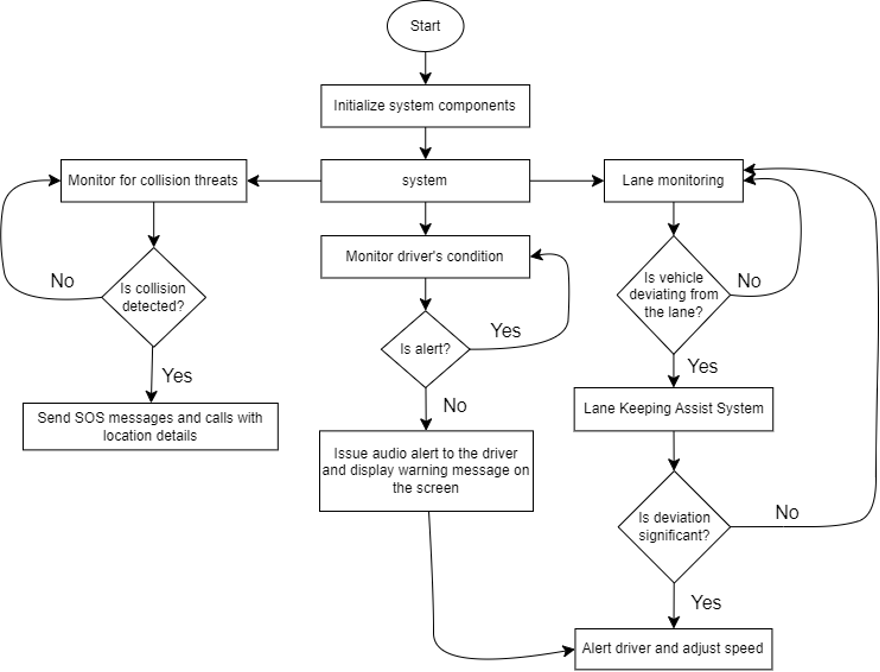

Road accidents are one of the leading causes of injury and death globally. This project addresses the problem by building a complete Advanced Driver Assistance System (ADAS) that monitors the road and the driver simultaneously, alerting in real time and taking corrective action when needed.

Six integrated safety components

Two points of view

The system operates with two independent camera perspectives running concurrently:

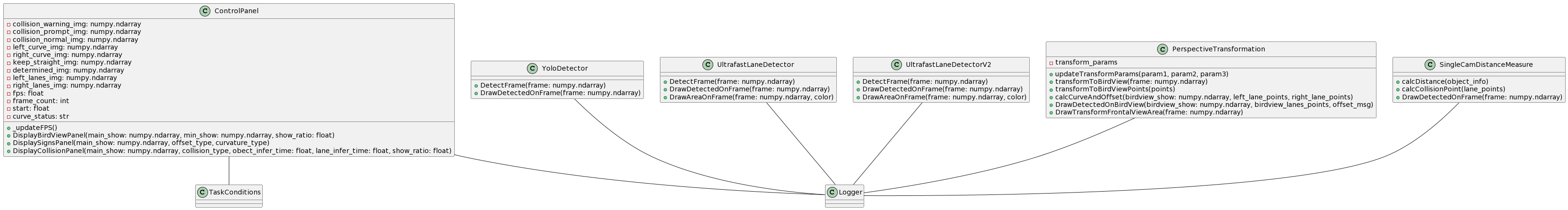

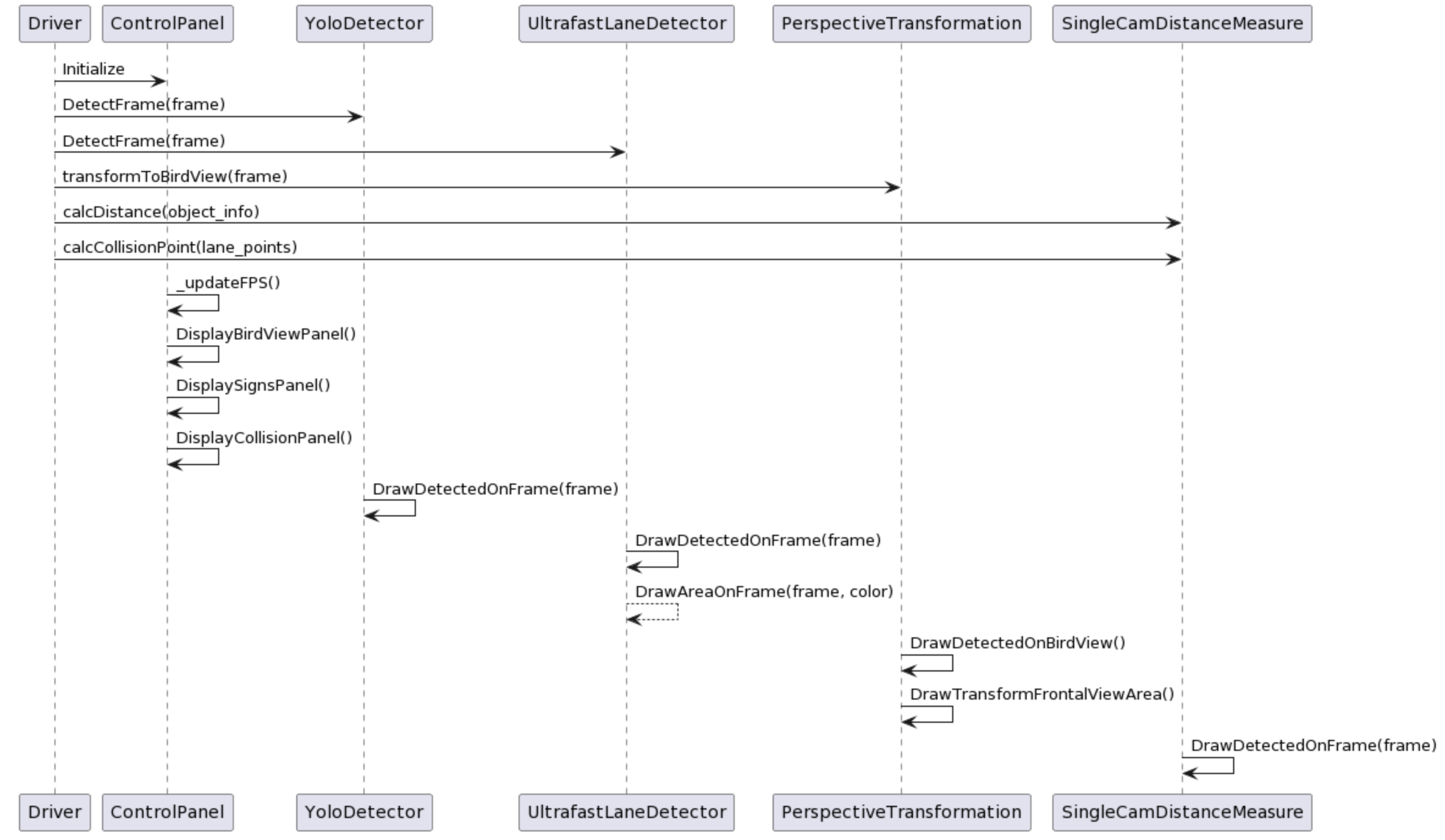

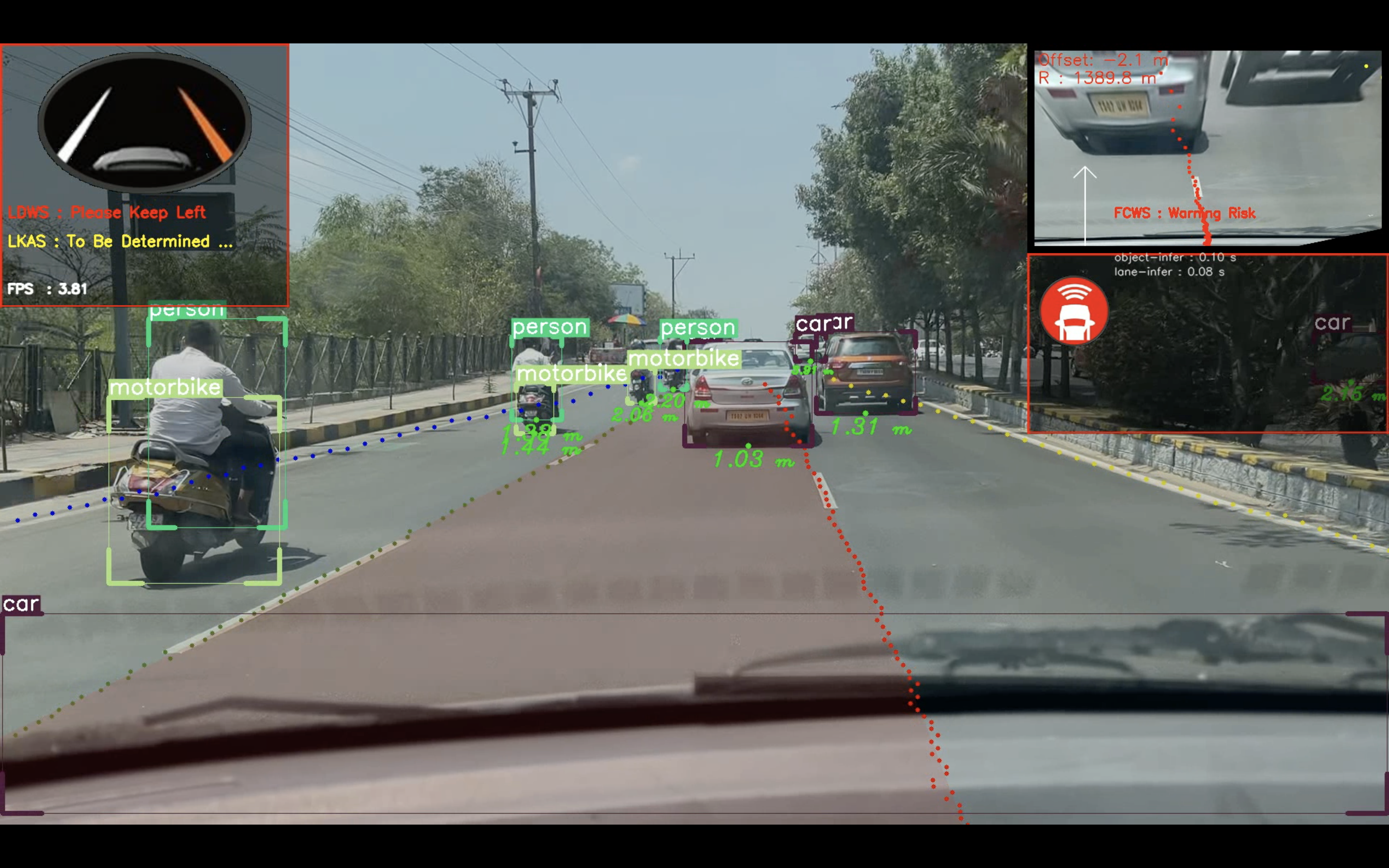
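As a rough illustration of two perspectives running concurrently, the sketch below drives two per-frame functions from separate threads. It is only an assumption about how such concurrency could look: the names `run_pipeline`, `process_road_frame`, `process_driver_frame`, and `run_both` are hypothetical placeholders, and the project itself launches each POV from its own script, as shown under "Running the project".

```python
import threading
import time


def run_pipeline(name, process_frame, stop_event, results, interval=0.01):
    """Repeatedly call one POV's per-frame function until asked to stop."""
    frame_index = 0
    while not stop_event.is_set():
        results[name] = process_frame(frame_index)
        frame_index += 1
        time.sleep(interval)  # stand-in for camera frame pacing


# Hypothetical placeholders for the real per-frame processing of each POV.
def process_road_frame(i):
    return f"road frame {i}"


def process_driver_frame(i):
    return f"driver frame {i}"


def run_both(duration=0.1):
    """Run both pipelines side by side for `duration` seconds."""
    stop_event = threading.Event()
    results = {}
    threads = [
        threading.Thread(
            target=run_pipeline,
            args=("pov1", process_road_frame, stop_event, results),
        ),
        threading.Thread(
            target=run_pipeline,
            args=("pov2", process_driver_frame, stop_event, results),
        ),
    ]
    for t in threads:
        t.start()
    time.sleep(duration)
    stop_event.set()  # signal both loops to exit
    for t in threads:
        t.join()
    return results
```

Keeping each pipeline in its own loop means a slow frame on one camera does not stall the other, which is the main point of treating the two perspectives as independent.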

POV 1 — Monitoring the external environment

Handles lane tracking, object detection, and collision warning. Three UML diagrams define the class relationships, collaboration, and use-case flows.

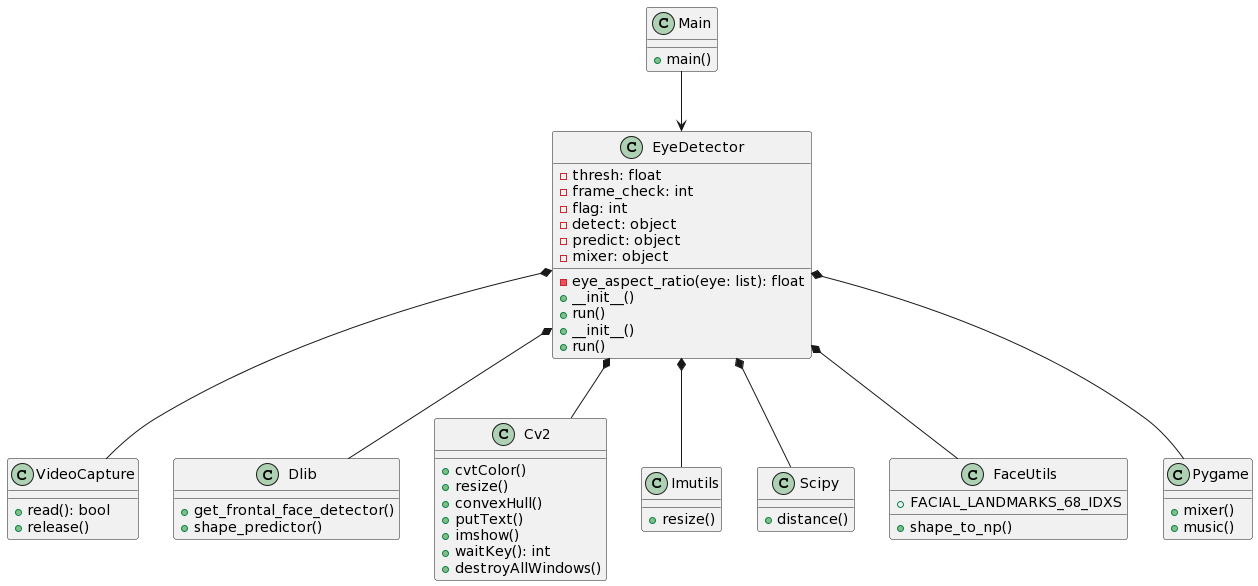

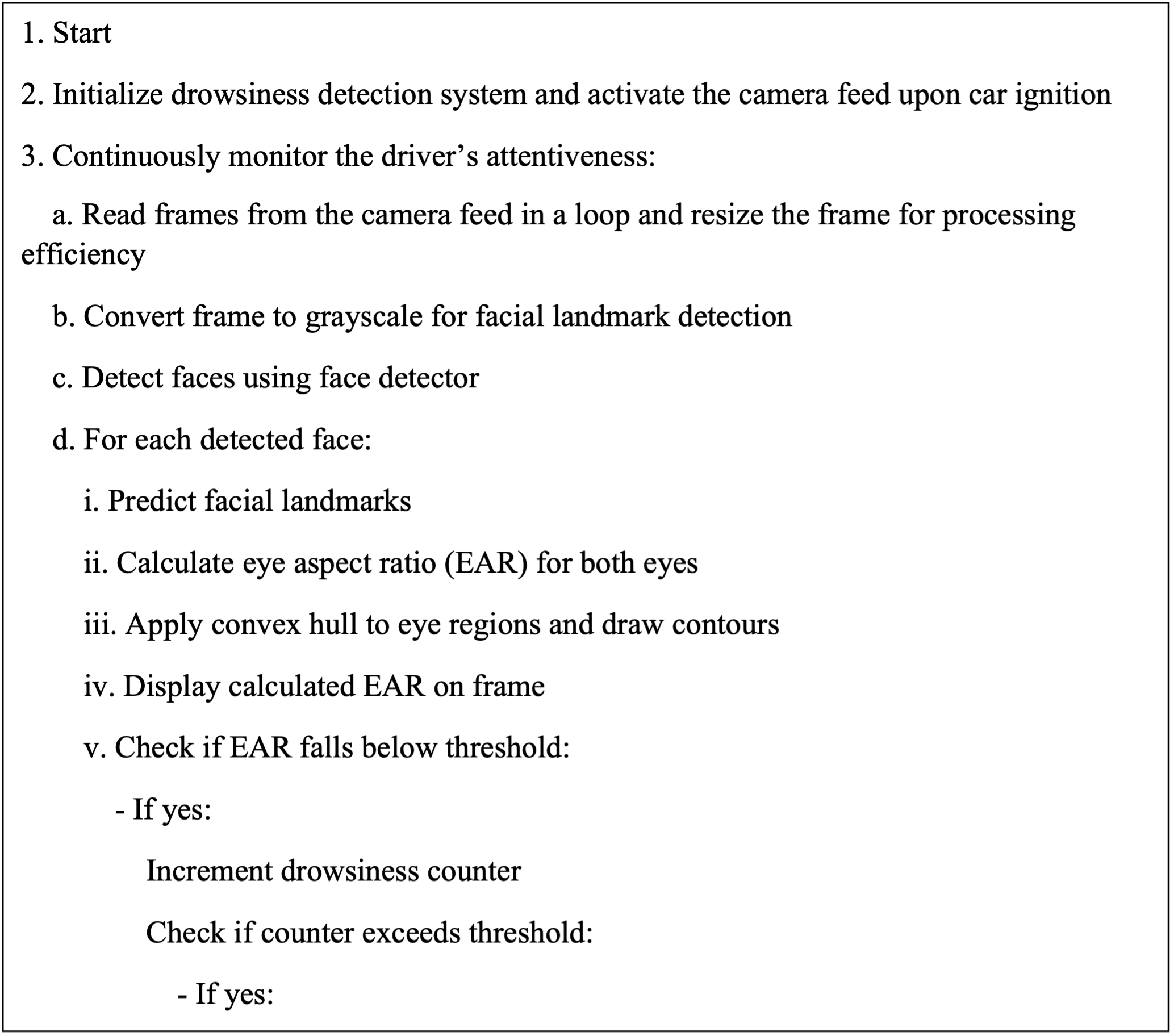

POV 2 — Monitoring the internal environment

Handles drowsiness detection using Eye Aspect Ratio (EAR). If EAR drops below a threshold for a sustained period, the system triggers an alert.
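The EAR check described above can be sketched as follows. This is a minimal illustration, not the project's actual `detect.py`: it uses the common six-landmark EAR definition, EAR = (|p2 - p6| + |p3 - p5|) / (2 |p1 - p4|), and the threshold (`0.25`) and frame count (`20`) are assumed values, since the source does not state them. The name `DrowsinessMonitor` is hypothetical.

```python
from math import dist

# Assumed values for illustration only; the project's real settings are not stated.
EAR_THRESHOLD = 0.25
MIN_CLOSED_FRAMES = 20


def eye_aspect_ratio(eye):
    """Compute EAR from six (x, y) eye landmarks ordered p1..p6.

    p1 and p4 are the horizontal eye corners; (p2, p6) and (p3, p5)
    are the two vertical landmark pairs.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = dist(p2, p6) + dist(p3, p5)
    horizontal = dist(p1, p4)
    return vertical / (2.0 * horizontal)


class DrowsinessMonitor:
    """Counts consecutive frames in which the EAR stays below the threshold."""

    def __init__(self, threshold=EAR_THRESHOLD, min_frames=MIN_CLOSED_FRAMES):
        self.threshold = threshold
        self.min_frames = min_frames
        self.closed_frames = 0

    def update(self, ear):
        """Feed one frame's EAR; return True when an alert should fire."""
        if ear < self.threshold:
            self.closed_frames += 1
        else:
            self.closed_frames = 0  # eyes reopened, so restart the count
        return self.closed_frames >= self.min_frames
```

Requiring a run of consecutive low-EAR frames, rather than a single one, keeps ordinary blinks from triggering the alert.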

System in action

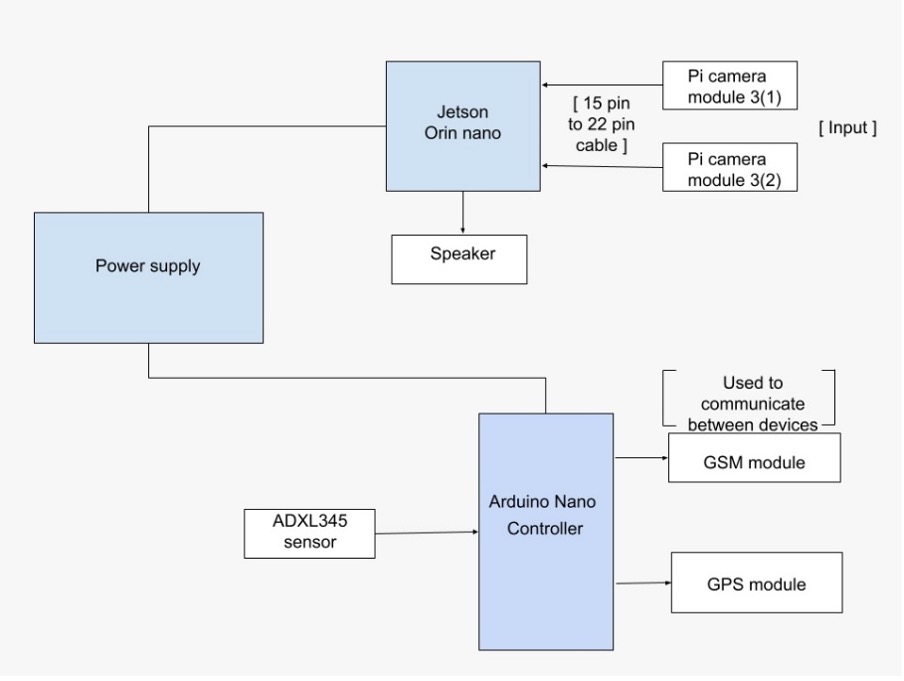

Jetson-powered edge deployment

Running the project

# Install dependencies

pip install -r requirements.txt

# Run POV_1 — external environment monitoring

python demo.py

# Run POV_2 — drowsiness detection (from Drowsiness detector dir)

python detect.py

YOLO model conversion

# Convert ONNX model to TensorRT for edge deployment

python convertOnnxToTensorRT.py -i <onnx-model> -o <trt-model>

# Quantize to float16 to reduce model size

python onnxQuantization.py -i <onnx-model>

This project demonstrates that a comprehensive ADAS suite, of the kind normally found in premium vehicles, can be built and deployed on affordable edge hardware like the Jetson Orin Nano. Because of the dual-POV architecture, the system watches the road and the driver at the same time, providing layered protection that neither a human driver nor a single-camera system can match alone.